We Can Regulate AI – What Does It Take?

Introduction: The Imperative of AI Regulation

As the integration and advancement of Artificial Intelligence (AI) accelerate across society's diverse sectors, the clarion call for its regulation grows louder. Striking a balance between encouraging innovation and mitigating potential risks necessitates regulatory frameworks. This article highlights the steps to regulate AI effectively through AI detection and the measures necessary to navigate its complex landscape.

Deciphering the Intricacies of AI Regulation

Navigating Rapid Technological Evolution

AI innovation is evolving at breakneck speed, often outstripping current regulatory frameworks. It is therefore critical for regulatory bodies to develop flexible, adaptable methods that can keep pace with the technology. Only when regulatory approaches keep up with AI can we keep a check on AI activity. AI-generated content checkers and AI detectors are useful tools for monitoring that activity, and they can help ensure that AI technologies advance responsibly.

Addressing AI's Complexity and Diversity

AI is a broad, multifaceted field covering many applications and technologies. To regulate AI and develop tools to check it, we first need to understand the different aspects of AI systems and the risks they pose. AI regulations must account for AI's varied and intricate nature and offer guidelines that can be tailored to each application, so that regulation is comprehensive.

Essential Aspects of AI Regulation

Formulation of Ethical Guidelines

The bedrock of AI regulation is the meticulous crafting of solid ethical principles. These principles, emphasizing transparency, fairness, accountability, and the avoidance of harm, form the moral compass guiding the function and development of AI systems. They operate like a built-in AI detector, alerting us to potential deviations from ethical conduct. The question is not simply whether something is human or AI, but how AI can adhere to human principles of fairness and respect in its various non-human capacities.

Stringent adherence to these principles is non-negotiable. This adherence is crucial in ensuring that AI technology doesn't reinforce or amplify existing societal biases but instead works towards an equitable, fair, and transparent digital environment. Tools such as a free AI-generated content checker can help ensure these principles are upheld, promoting the creation of systems that align with our highest ethical standards.

Advocacy for Transparency and Interpretability in AI

'Explainable AI' is a fundamental pillar of AI regulation. Users and overseers must be able to gain comprehensible insights into AI operations, and resources like an AI-generated content checker can aid in deciphering AI processes. At the same time, AI creators are responsible for providing clear information on their systems' capabilities and limitations. This approach promotes transparency and empowers users and regulators to engage with AI technology.

Stakeholder Engagement and Collaboration

In the journey towards comprehensive AI regulation, fostering a cooperative environment is essential. Stakeholder engagement and collaboration serve as the linchpin to this end. A strategic approach is to form advisory boards and committees that bring together a mix of perspectives: government bodies, private-sector businesses, and civil society representatives.

These diverse assemblies aid in navigating the AI detection landscape, drawing on resources like an AI detector and a free AI-generated content checker. This collective wisdom significantly enhances the formulation of AI regulations, ensuring a well-rounded and robust framework that considers the perspectives of various stakeholders. In essence, collaboration is not just advisable but indispensable in shaping the trajectory of AI regulation.

International Cooperation in AI Regulation

In an era where AI technology extends beyond borders, the necessity for international collaboration becomes increasingly prominent. Hence, fostering a culture of international cooperation is critical for effective AI regulation. This requires synchronized regulations underpinned by shared knowledge, promoting mutual learning and improvement.

In fact, with the advent of AI detectors and AI-generated content checkers, countries are developing tools to identify AI-generated art and differentiate it from human creations. Furthermore, "AI-generated or not" checkers are being used to detect AI-generated faces and to ascertain whether an entity is human or AI.

Global collaboration can also address how to recognize fake AI-generated images, making the digital world safer and more transparent. By crafting international standards for AI, we enhance the consistency, interoperability, and accountability of AI usage, thus fostering responsible global AI practices. Through this concerted effort, we can ensure AI evolves in a manner beneficial to all societies.

Continuous Evaluation and Adaptation

Static regulations are not enough in the swiftly changing landscape of AI technology. Hence, the continuous evaluation and adaptation of these regulations is a non-negotiable aspect of effective AI governance. Regulations should be flexible enough to adapt and evolve with AI advancements, acting like an AI detector that anticipates and responds to emergent challenges.

Regular evaluations, using tools like free AI-generated content checkers, allow us to identify areas for improvement, ensuring regulations remain relevant and robust. Iterative adjustment of regulations is a proactive way to align with the dynamic nature of AI. This process also fosters transparency and trust, enabling users to rely confidently on tools like an "AI-generated or not" checker. Ultimately, constant vigilance and adaptation help keep regulation equipped to ensure AI technology's safe and responsible use.

Risk Assessment and Management

A significant facet of AI regulation is the strategic practice of risk assessment and management. This process entails regular evaluations of AI applications using tools such as an AI detector or an AI-generated checker to identify potential risks, from privacy breaches to ethical issues. By recognizing the possible harms in advance, effective preventive measures can be implemented, managing the risk profile of AI systems.

Furthermore, this proactive approach supports the detection of AI-generated content, enabling a deeper understanding of its implications. Ultimately, systematic risk assessment and management are integral to enhancing AI technology's safety and ethical use across various sectors.
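
As a rough illustration of the assessment step in this section, identified risks can be recorded in a simple register and ranked by a likelihood-times-impact score. This is a minimal sketch under stated assumptions: the 1 to 5 scales, the threshold of 12, and the names `Risk` and `prioritize` are hypothetical choices for this example, not part of any regulatory standard or detection tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """One identified risk for an AI application (hypothetical structure)."""
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Simple risk-matrix score: likelihood multiplied by impact (1..25).
        return self.likelihood * self.impact


def prioritize(risks: list[Risk], threshold: int = 12) -> list[Risk]:
    """Return the risks scoring at or above the threshold, highest first."""
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    # Example entries are invented for illustration only.
    register = [
        Risk("privacy breach", likelihood=3, impact=5),
        Risk("biased outputs", likelihood=4, impact=4),
        Risk("minor UI confusion", likelihood=4, impact=1),
    ]
    for risk in prioritize(register):
        print(f"{risk.name}: {risk.score}")
```

In practice the scales and threshold would be set by whoever owns the assessment; the point of the sketch is only that flagged risks can be reviewed first, before preventive measures are chosen.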

Public Awareness and Understanding

Educating the public about Artificial Intelligence (AI) is essential to effective AI regulation. Increased public awareness fosters informed engagement with AI, helping people identify AI-generated content using tools like a free AI-generated content checker or an AI-generated art test. This understanding enables users to ask whether something was made by AI or by a human. It also helps them detect AI-generated faces and learn how to recognize fake AI-generated images.

Such familiarity empowers users to engage with AI effectively, from interpreting the output of "AI-generated or not" checkers to navigating complex AI interfaces. It also aids in dispelling unfounded fears, encouraging responsible AI usage, and fostering public trust in AI systems. Thus, widespread awareness and understanding are vital pillars in the edifice of comprehensive AI regulation.

Conclusion: Embracing AI Responsibly

As we witness the rapid advancement of AI technology and its impact on our daily lives, it becomes imperative that we not only acknowledge its advantages but also confront its challenges proactively. Regulatory frameworks, ethical guidelines, transparency measures, and AI detection tools are all critical components in our pursuit of the responsible integration of AI in society. Only through these measures can we ensure that AI technologies are developed and implemented in a safe, fair, and beneficial manner for all.

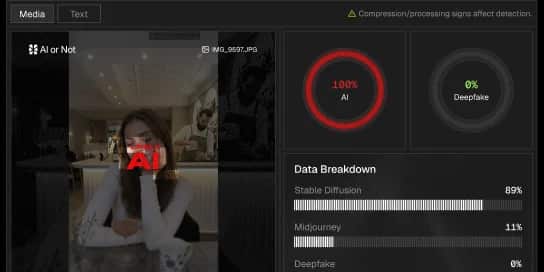

What do you get with AI or Not?

Instantly get your AI detection API to start building, and protecting.

AI detection covering images, text, video and audio.

All content checked gets deleted, instantly.

Start detecting AI for free, scale with pay-as-you-go.

AI to fight AI.