AI Detection Benchmark 2026: AI or Not vs ZeroGPT for Kimi K2

A 47 Essay Case Study Reveals Which AI Detector You Can Actually Trust in 2026

AI detection is supposed to safeguard the line between human and machine generated content. But what happens when that line fails against the most capable models being built today? This case study puts two detection tools head to head against Kimi K2 Thinking, a model widely seen as among the most sophisticated frontier reasoning tools available to the public.

**When AI Writes Like a Founding Father**

In a now widely cited incident, ZeroGPT flagged the original text of the 1776 Declaration of Independence as AI generated content. The document, penned by human hands in the heat of the revolution nearly two and a half centuries before large language models existed, was assigned an AI probability score of 99%. This isn't a trivial anecdote. It exposes a core flaw in how detectors like ZeroGPT work, and results like it give AI detection companies a bad name with the general public.

This leads us to a case study conducted using the Kimi K2 Thinking model. Considered a frontier AI reasoning model, it doesn't just write grammatically; it reasons. It structures arguments. It uses evidence, counterarguments, and nuanced transitions. It writes the kind of content that would make ZeroGPT’s model architecture sweat.

How We Ran the AI Detection Test

The Model

All AI generated content was created using Kimi K2 Thinking, the advanced reasoning variant of Moonshot AI’s Kimi K2 models. This frontier reasoning model was used to produce long-form structured writing, argument construction, and academic-style writing.

The Kimi Prompt

We generated over 40 papers and essays spanning academia, social science, economics, marketing psychology, and technology. Word counts ranged from 750 to 1,000 words per piece.

Topics included:

| 01 - AI reshaping the workforce | 02 - Social media & identity |
|---|---|
| 03 - Geopolitics of renewable energy | 04 - CRISPR & gene editing ethics |
| 05 - Climate justice & inequality | 06 - Misinformation & democracy |
| 07 - Streaming vs. traditional TV | 08 - Space exploration & prestige |
| 09 - Automation & supply chains | 10 - Mental health & college GPA |
| 11 - Surveillance tech & privacy | 12 - Student loan debt (Millennials/Gen Z) |
| 13 - Universal basic income (UBI) | 14 - Globalization & language preservation |
| 15 - Predictive policing ethics | 16 - Consumer psychology (×18 essays) |

**The Legacy Detectors**

Two AI detection tools were selected for this study, chosen to represent both an emerging challenger and an established, widely used platform.

AI or Not: A detection platform built with a focus on keeping pace with the latest generation of large language models. AI or Not evaluates content at a structural and probabilistic level, rather than focusing only on surface-level linguistic signals.

ZeroGPT: One of the most widely used free AI detection tools available to the public. ZeroGPT gained mainstream adoption in 2023 and is frequently used by educators, employers, and content platforms to flag machine generated writing.

Each of the 47 essays was submitted to both detectors under identical conditions. No content was modified, paraphrased, or humanized prior to submission.
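The submission procedure described above can be pictured as a small evaluation loop. The following is only a minimal sketch: the `Sample` type, the `evaluate` function, and the two toy detectors are hypothetical stand-ins invented for illustration, and neither AI or Not nor ZeroGPT is actually called here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Sample:
    text: str
    is_ai: bool  # ground-truth label for the essay


# Assumption: a detector is modeled as any function that maps text
# to True (flagged as AI) or False (judged human).
Detector = Callable[[str], bool]


def evaluate(samples: List[Sample], detectors: Dict[str, Detector]) -> Dict[str, float]:
    """Run every detector on the same unmodified samples and return accuracy."""
    results = {}
    for name, detect in detectors.items():
        correct = sum(1 for s in samples if detect(s.text) == s.is_ai)
        results[name] = correct / len(samples)
    return results


# Toy detectors, purely illustrative (not real detection logic).
def always_ai(text: str) -> bool:
    return True


def length_rule(text: str) -> bool:
    return len(text.split()) > 5


samples = [
    Sample("short human note", False),
    Sample("a much longer machine generated passage of text", True),
]
scores = evaluate(samples, {"always_ai": always_ai, "length_rule": length_rule})
print(scores)
```

Feeding every detector the same list of samples is what keeps the comparison fair: any difference in score comes from the detector, not from the input.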

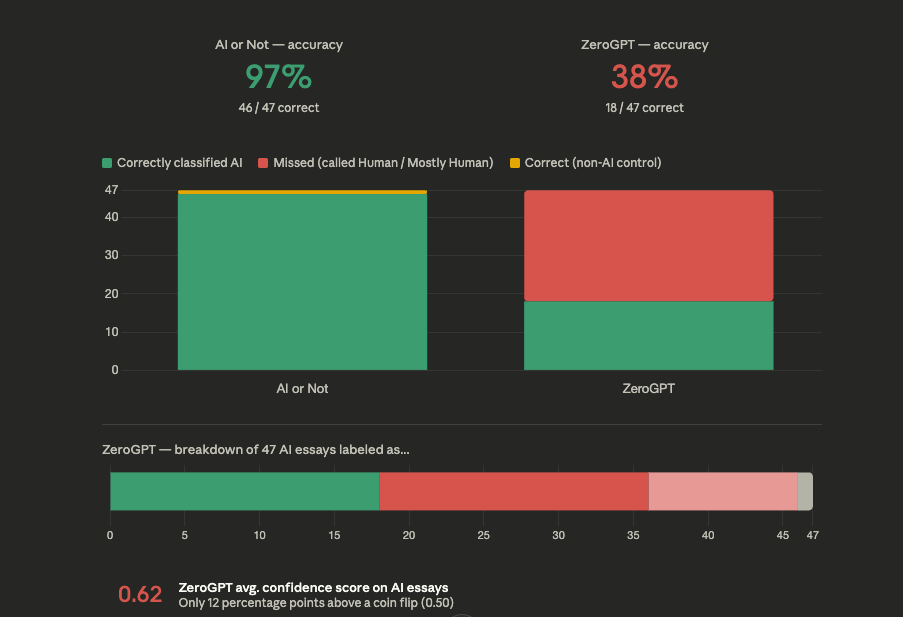

Overall Results at a Glance

| ✓ AI OR NOT 97% | ✗ ZEROGPT 38% |
|---|---|
| Correctly classified all 46 AI generated essays as AI. Also correctly identified the 1 human written control. One error across 47 samples. | Correctly labeled only ~18 of 47 AI essays as AI. Called 18 essays "Human" and 10 more "Mostly Human", a 61%+ miss rate on clearly AI generated content. |

AI or Not achieved 97% accuracy, correctly identifying 46 out of 47 essays as AI written content. ZeroGPT achieved 38% accuracy, correctly identifying 18 out of 47 essays as AI written content. It labeled more than half of the AI written content as human written or mixed content, a miss rate of roughly 62% on clearly machine generated content.
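For readers who want to check the arithmetic, the headline percentages follow directly from the counts reported above. This is a small sketch using only those counts; the helper name `rate` is ours, not part of either tool.

```python
def rate(count: int, total: int) -> float:
    """Return count / total as a percentage."""
    return 100 * count / total


TOTAL_ESSAYS = 47        # essays submitted to each detector
AI_OR_NOT_CORRECT = 46   # correct classifications reported for AI or Not
ZEROGPT_CORRECT = 18     # correct classifications reported for ZeroGPT

ai_or_not_accuracy = rate(AI_OR_NOT_CORRECT, TOTAL_ESSAYS)
zerogpt_accuracy = rate(ZEROGPT_CORRECT, TOTAL_ESSAYS)
zerogpt_miss_rate = 100 - zerogpt_accuracy

print(f"AI or Not accuracy: {ai_or_not_accuracy:.1f}%")
print(f"ZeroGPT accuracy:   {zerogpt_accuracy:.1f}%")
print(f"ZeroGPT miss rate:  {zerogpt_miss_rate:.1f}%")
```

Computed to one decimal place, these come to about 97.9%, 38.3%, and 61.7%, in line with the rounded figures quoted in the text.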

ZeroGPT’s average confidence score across the 47 AI essays was just 0.62 (62%). A coin flip produces 0.50. That is a margin of only 12 percentage points above random guessing when applied to a frontier AI model such as Moonshot AI’s Kimi K2 Thinking.
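The coin-flip comparison is a subtraction of two scores. The sketch below assumes the average confidence is 0.62 on a 0 to 1 scale (our reading of the reported figure) and also shows the relative margin, since "percentage points" and "percent" are easy to confuse.

```python
AVERAGE_CONFIDENCE = 0.62  # assumed 0-to-1 scale, i.e. 62%
COIN_FLIP = 0.50           # expected score from random guessing

# Absolute gap, expressed in percentage points.
margin_points = round((AVERAGE_CONFIDENCE - COIN_FLIP) * 100, 1)

# Relative gap, expressed as a percent of the coin-flip baseline.
margin_relative = round((AVERAGE_CONFIDENCE - COIN_FLIP) / COIN_FLIP * 100, 1)

print(f"{margin_points} percentage points above chance")
print(f"{margin_relative}% higher than the coin-flip baseline")
```

The 12-point figure is the absolute gap. Relative to the 0.50 baseline the same gap is 24%, which is why the unit matters when quoting it.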

Case Study Information and Data

What This Study Tells You

While this test focused exclusively on the Kimi K2 Thinking model and did not include other models, the outcomes provide strong evidence that AI or Not’s detection model is meaningfully superior in this case study and controlled environment. It also suggests that AI or Not is continuously being trained on the newest and most capable large language models being released to the public. ZeroGPT's results, in turn, suggest that widespread, free to use tools may be creating a false sense of security for the institutions relying on them most. If you are using AI detection to make meaningful decisions in education, hiring, publishing, or compliance, the tool you should use is AI or Not.

FAQ

Is ZeroGPT reliable for detecting AI written essays?

Based on this study, ZeroGPT missed over 60% of clearly AI generated essays from Kimi K2 Thinking, with an average confidence score of just 62%. That is only about 12 percentage points above a random guess or a coin flip.

What is the most accurate AI detector for Moonshot's Kimi Models?

AI or Not outperformed ZeroGPT specifically on reasoning model outputs, correctly identifying 46 of 47 essays generated by the Kimi K2 Thinking model. Reasoning models can be difficult to detect because they construct arguments rather than just generating fluent text.

Is ZeroGPT accurate in 2026?

Based on this case study and its results, no. ZeroGPT isn't accurate in 2026 when dealing with models similar to Kimi K2. It correctly flagged only 38% of the AI written essays and posted a low average confidence score during the case study.

Should students, teachers or universities use ZeroGPT to detect essays for AI usage?

This case study suggests a significant risk in doing so. With a miss rate above 60% on unmodified AI content, ZeroGPT would likely fail to flag a large portion of AI assisted work. That leaves teachers and universities in a tough predicament over whether a student should receive a zero or a hundred on the writing assignment.

Which AI detectors actually work on ChatGPT and frontier models?

AI or Not was purpose built to keep pace with the latest LLM releases. In this study it correctly caught content that ZeroGPT missed repeatedly, suggesting it's actively trained on newer model outputs.

What do you get with AI or Not?

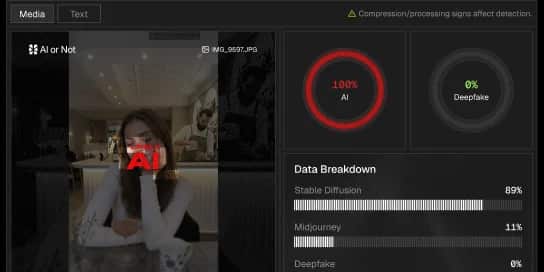

Instantly get your AI detection API to start building and protecting.

AI detection covering images, text, video and audio.

All content checked gets deleted, instantly.

Start detecting AI for free, scale with pay-as-you-go.

AI to fight AI.